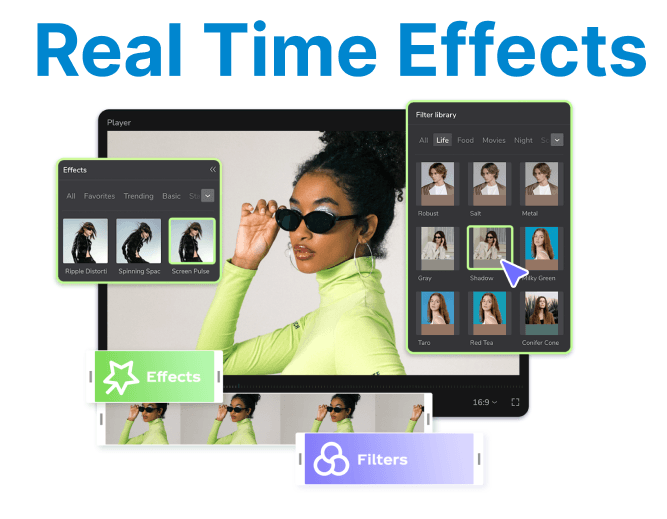

CUDA enables GPU execution of the pixel-wise operations required for real-time video effects and filters. These operations include color grading, blurring, edge detection, sharpening, and dynamic overlays. Executing effects directly on the GPU minimizes latency, which makes this approach well suited to live video pipelines and interactive applications.

Frame Buffer Allocation on GPU

Each frame is treated as a 2D matrix of pixels. Real-time systems should allocate frame buffers once and reuse them across frames, which avoids the overhead of calling cudaMalloc() for every frame.

Example: Allocate RGB Frame on Device

    uint8_t* d_rgb_frame;
    size_t pitch;
    // Row width is given in bytes: 3 channels (RGB) per pixel
    cudaMallocPitch(&d_rgb_frame, &pitch, width * 3, height);

- Pitch pads each row so that rows start on aligned addresses, which helps coalesced memory access.

- Use the returned pitch (in bytes) as the row stride in custom kernels; rows are not guaranteed to be exactly width * 3 bytes apart.

- Works well with video frames decoded using NVDEC in NV12 or RGB format.
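The pitch advice above can be sketched as follows. This is a minimal illustration, assuming a tightly packed 8-bit RGB frame on the host; the names `invert_color_pitched` and `run_pitched_invert` are hypothetical and do not come from the original text. It shows rows addressed through the byte pitch, and `cudaMemcpy2D` used to copy between the packed host buffer and the pitched device buffer.

```cuda
#include <cstdint>
#include <cuda_runtime.h>

// Hypothetical kernel: inverts a packed RGB frame stored in pitched memory.
__global__ void invert_color_pitched(uint8_t* frame, size_t pitch, int width, int height) {
    int x = blockIdx.x * blockDim.x + threadIdx.x;
    int y = blockIdx.y * blockDim.y + threadIdx.y;
    if (x >= width || y >= height) return;

    // The pitch is measured in bytes, so rows are stepped on a byte pointer.
    uint8_t* row = frame + (size_t)y * pitch;
    for (int c = 0; c < 3; ++c)
        row[x * 3 + c] = 255 - row[x * 3 + c];
}

// Hypothetical host helper: uploads a packed frame, inverts it on the GPU,
// and copies the result back in place. Returns the first CUDA error seen.
cudaError_t run_pitched_invert(uint8_t* h_frame, int width, int height) {
    uint8_t* d_frame = nullptr;
    size_t pitch = 0;
    size_t row_bytes = (size_t)width * 3;

    cudaError_t err = cudaMallocPitch((void**)&d_frame, &pitch, row_bytes, height);
    if (err != cudaSuccess) return err;

    // Host rows are row_bytes apart; device rows are pitch bytes apart.
    err = cudaMemcpy2D(d_frame, pitch, h_frame, row_bytes,
                       row_bytes, height, cudaMemcpyHostToDevice);
    if (err == cudaSuccess) {
        dim3 threads(16, 16);
        dim3 blocks((width + 15) / 16, (height + 15) / 16);
        invert_color_pitched<<<blocks, threads>>>(d_frame, pitch, width, height);
        err = cudaGetLastError();
    }
    if (err == cudaSuccess) {
        // The blocking copy also waits for the kernel to finish.
        err = cudaMemcpy2D(h_frame, row_bytes, d_frame, pitch,
                           row_bytes, height, cudaMemcpyDeviceToHost);
    }
    cudaFree(d_frame);
    return err;
}
```

In a real-time pipeline the allocation would be done once and reused; it sits inside the helper here only to keep the sketch self-contained.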

Color Inversion Filter

A simple pixel-wise operation that inverts each color component by subtracting it from 255. Each pixel is processed independently, so the work parallelizes trivially.

CUDA Kernel

A CUDA kernel is a GPU function marked with __global__ that executes concurrently across many threads. Each thread typically processes a single pixel or pixel component in a video frame.

Kernels are designed to maximize parallelism and are responsible for implementing pixel-level logic such as color inversion, blurring, or brightness adjustments. Efficient memory access and thread independence are critical to achieving high performance.

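    // Assumes tightly packed rows (row stride == width * 3 bytes).
    // For a buffer from cudaMallocPitch, step rows by the pitch instead.
    __global__ void invert_color(uint8_t* frame, int width, int height) {
        int x = blockIdx.x * blockDim.x + threadIdx.x;
        int y = blockIdx.y * blockDim.y + threadIdx.y;

        // Threads in partial blocks may fall outside the frame
        if (x >= width || y >= height) return;

        int idx = (y * width + x) * 3;
        frame[idx]     = 255 - frame[idx];      // R
        frame[idx + 1] = 255 - frame[idx + 1];  // G
        frame[idx + 2] = 255 - frame[idx + 2];  // B
    }

Launch Configuration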

The launch configuration defines how threads are organized and dispatched on the GPU. The dim3 values specify the number of threads per block and the number of blocks per grid, typically mapped to the 2D dimensions of a video frame.

Rounding the block count up ensures that the grid covers every pixel, and the bounds check in the kernel discards the extra threads that fall outside the frame. Block sizes that are a multiple of the 32-thread warp size avoid partially filled warps and help GPU occupancy.

    dim3 threads(16, 16);  // 256 threads per block
    dim3 blocks((width + 15) / 16, (height + 15) / 16);  // round up to cover partial blocks
    invert_color<<<blocks, threads>>>(d_rgb_frame, width, height);

- Use 16x16 threads per block (256 threads, or 8 full warps) as a reasonable default.

- For 4K and larger frames the grid grows accordingly: a 3840x2160 frame needs 240 x 135 blocks of 16x16 threads.
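The grid sizing above can be wrapped in a small helper. The sketch below is illustrative only: `grid_for_frame`, `fill_value`, and `launch_fill` are hypothetical names introduced here, and `fill_value` merely stands in for any per-pixel filter. It also shows checking `cudaGetLastError()` after a launch, which reports invalid launch configurations.

```cuda
#include <cstdint>
#include <cuda_runtime.h>

// Hypothetical helper: ceiling division so the grid covers every pixel,
// including partial blocks at the right and bottom edges.
inline dim3 grid_for_frame(int width, int height, dim3 block) {
    return dim3((width + block.x - 1) / block.x,
                (height + block.y - 1) / block.y);
}

// Hypothetical stand-in for a per-pixel filter: writes one value to every
// byte of a tightly packed RGB frame.
__global__ void fill_value(uint8_t* frame, uint8_t value, int width, int height) {
    int x = blockIdx.x * blockDim.x + threadIdx.x;
    int y = blockIdx.y * blockDim.y + threadIdx.y;
    if (x >= width || y >= height) return;  // discard out-of-frame threads
    int idx = (y * width + x) * 3;
    frame[idx] = frame[idx + 1] = frame[idx + 2] = value;
}

// Launches the kernel and returns the launch status.
cudaError_t launch_fill(uint8_t* d_frame, uint8_t value, int width, int height) {
    dim3 threads(16, 16);
    dim3 blocks = grid_for_frame(width, height, threads);
    fill_value<<<blocks, threads>>>(d_frame, value, width, height);
    return cudaGetLastError();
}
```

For a 1920x1080 frame this yields a 120 x 68 grid; the last row of blocks is only half used, which is why the bounds check in the kernel matters.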

Box Blur Filter (3x3 Kernel)

Box blur computes the average of each pixel's 3×3 neighborhood. It is a separable convolution, but it is implemented here as a naive full kernel for clarity.

CUDA Kernel

    __global__ void box_blur(const uint8_t* input, uint8_t* output, int width, int height) {
        int x = blockIdx.x * blockDim.x + threadIdx.x;
        int y = blockIdx.y * blockDim.y + threadIdx.y;
        if (x >= width || y >= height) return;

        int idx = (y * width + x) * 3;

        // Border pixels lack a full 3x3 neighborhood; copy them through
        // so that the output frame is fully defined.
        if (x < 1 || y < 1 || x >= width - 1 || y >= height - 1) {
            for (int c = 0; c < 3; ++c)
                output[idx + c] = input[idx + c];
            return;
        }

        for (int c = 0; c < 3; ++c) {
            int sum = 0;
            for (int j = -1; j <= 1; ++j)
                for (int i = -1; i <= 1; ++i)
                    sum += input[((y + j) * width + (x + i)) * 3 + c];
            output[idx + c] = sum / 9;  // average of 9 samples
        }
    }

- Replace with a separable filter (horizontal + vertical) for better performance.

- Use shared memory to avoid global memory re-access per neighbor.
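The separable variant suggested above might look like the following sketch. It makes several assumptions that are not in the original: tightly packed RGB rows, edge pixels handled by clamping coordinates (rather than being skipped), and hypothetical names `box_blur_rows`, `box_blur_cols`, and `box_blur_separable`. The horizontal pass stores unrounded 16-bit sums, so interior pixels come out the same as with the full 3x3 kernel.

```cuda
#include <cstdint>
#include <cuda_runtime.h>

// Horizontal pass: per-channel sum of each pixel and its left/right neighbors.
// Sums stay in 16 bits (at most 3 * 255), so nothing is rounded yet.
__global__ void box_blur_rows(const uint8_t* input, uint16_t* row_sums, int width, int height) {
    int x = blockIdx.x * blockDim.x + threadIdx.x;
    int y = blockIdx.y * blockDim.y + threadIdx.y;
    if (x >= width || y >= height) return;
    for (int c = 0; c < 3; ++c) {
        int sum = 0;
        for (int i = -1; i <= 1; ++i) {
            int xi = min(max(x + i, 0), width - 1);  // assumption: clamp at edges
            sum += input[(y * width + xi) * 3 + c];
        }
        row_sums[(y * width + x) * 3 + c] = (uint16_t)sum;
    }
}

// Vertical pass: adds three row sums and divides once by 9.
__global__ void box_blur_cols(const uint16_t* row_sums, uint8_t* output, int width, int height) {
    int x = blockIdx.x * blockDim.x + threadIdx.x;
    int y = blockIdx.y * blockDim.y + threadIdx.y;
    if (x >= width || y >= height) return;
    for (int c = 0; c < 3; ++c) {
        int sum = 0;
        for (int j = -1; j <= 1; ++j) {
            int yj = min(max(y + j, 0), height - 1);  // assumption: clamp at edges
            sum += row_sums[(yj * width + x) * 3 + c];
        }
        output[(y * width + x) * 3 + c] = (uint8_t)(sum / 9);
    }
}

// Hypothetical host wrapper that runs both passes on a packed RGB frame.
cudaError_t box_blur_separable(const uint8_t* h_in, uint8_t* h_out, int width, int height) {
    size_t n = (size_t)width * height * 3;
    uint8_t* d_in = nullptr;
    uint8_t* d_out = nullptr;
    uint16_t* d_sums = nullptr;
    cudaMalloc(&d_in, n);
    cudaMalloc(&d_out, n);
    cudaMalloc(&d_sums, n * sizeof(uint16_t));
    cudaMemcpy(d_in, h_in, n, cudaMemcpyHostToDevice);

    dim3 threads(16, 16);
    dim3 blocks((width + 15) / 16, (height + 15) / 16);
    box_blur_rows<<<blocks, threads>>>(d_in, d_sums, width, height);
    box_blur_cols<<<blocks, threads>>>(d_sums, d_out, width, height);

    cudaError_t err = cudaGetLastError();
    if (err == cudaSuccess)  // the blocking copy waits for both kernels
        err = cudaMemcpy(h_out, d_out, n, cudaMemcpyDeviceToHost);
    cudaFree(d_in);
    cudaFree(d_out);
    cudaFree(d_sums);
    return err;
}
```

Each pass reads three samples per channel instead of nine, at the cost of an intermediate buffer and a second kernel launch. For a 3x3 filter the gain is modest; it grows with the filter radius.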

Gamma Correction

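Gamma correction applies a non-linear adjustment to each color channel: the value is normalized to [0, 1] and raised to the power gamma. With this formula, gamma < 1 brightens the image and gamma > 1 darkens it, changing brightness and contrast in a way that tracks perceived luminance more closely than linear scaling.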

CUDA Kernel

    __global__ void gamma_correct(uint8_t* frame, float gamma, int width, int height) {
        int x = blockIdx.x * blockDim.x + threadIdx.x;
        int y = blockIdx.y * blockDim.y + threadIdx.y;
        if (x >= width || y >= height) return;

        int idx = (y * width + x) * 3;
        for (int c = 0; c < 3; ++c) {
            float normalized = frame[idx + c] / 255.0f;  // map to [0, 1]
            normalized = powf(normalized, gamma);
            // Clamp to [0, 1], scale back, and round to the nearest integer
            frame[idx + c] = (uint8_t)(__saturatef(normalized) * 255.0f + 0.5f);
        }
    }

- Use __saturatef() to clamp values to the [0, 1] range.

- Optionally precompute LUTs for fixed gamma values to improve performance.
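The lookup-table suggestion can be sketched as below, assuming 8-bit channels so that 256 entries cover every input value. The names `build_gamma_lut` and `gamma_correct_lut` are hypothetical. The table is built once on the host for a fixed gamma, copied to device memory by the caller, and the kernel then replaces each `powf` call with a single table read.

```cuda
#include <cmath>
#include <cstdint>
#include <cuda_runtime.h>

// Host side: precompute the gamma curve for all 256 possible channel values.
void build_gamma_lut(float gamma, uint8_t lut[256]) {
    for (int v = 0; v < 256; ++v) {
        float out = powf(v / 255.0f, gamma) * 255.0f;
        lut[v] = (uint8_t)(out + 0.5f);  // round to nearest
    }
}

// Device side: one table read per channel instead of one powf per channel.
// lut must point to 256 bytes of device memory filled from build_gamma_lut.
__global__ void gamma_correct_lut(uint8_t* frame, const uint8_t* __restrict__ lut,
                                  int width, int height) {
    int x = blockIdx.x * blockDim.x + threadIdx.x;
    int y = blockIdx.y * blockDim.y + threadIdx.y;
    if (x >= width || y >= height) return;

    int idx = (y * width + x) * 3;  // assumes tightly packed RGB rows
    for (int c = 0; c < 3; ++c)
        frame[idx + c] = lut[frame[idx + c]];
}
```

The table lives in ordinary global memory here. Constant memory is another option, but it is fastest when all threads in a warp read the same address, which is not the case for per-pixel lookups, so the choice is worth measuring.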

CUDA Streams for Async Processing

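Use CUDA streams to overlap memory transfers with kernel execution and to run independent work concurrently. Operations queued on the same stream execute in issue order; overlap happens between operations on different streams. This is crucial for pipelining video frames in real time.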

    cudaStream_t stream;
    cudaStreamCreate(&stream);

    // Launch kernels in a stream; they run in issue order within that stream
    invert_color<<<blocks, threads, 0, stream>>>(d_rgb_frame, width, height);
    box_blur<<<blocks, threads, 0, stream>>>(d_input, d_output, width, height);

    // Asynchronous transfer; can overlap with work queued on other streams (if needed)
    cudaMemcpyAsync(..., cudaMemcpyHostToDevice, stream);

    cudaStreamSynchronize(stream);  // Wait for completion
    cudaStreamDestroy(stream);      // Release the stream when it is no longer needed

- Multiple streams can be used for multi-stage frame processing.

- Stream priority and concurrency tuning may be necessary for multi-frame batching.
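A multi-stream pipeline along these lines could be sketched as follows. Everything here is an illustrative assumption rather than the original author's design: two streams, one device buffer per stream, frames stored back to back in page-locked (pinned) host memory, and the hypothetical names `invert_bytes` and `process_frames_two_streams`. Pinned host memory matters because asynchronous copies from pageable memory generally cannot overlap with kernel execution.

```cuda
#include <cstdint>
#include <cuda_runtime.h>

// Hypothetical per-byte filter used as the processing stage.
__global__ void invert_bytes(uint8_t* data, size_t n) {
    size_t i = blockIdx.x * (size_t)blockDim.x + threadIdx.x;
    if (i < n) data[i] = 255 - data[i];
}

// Processes num_frames frames in place. h_pinned must be pinned host memory
// (for example from cudaMallocHost) holding the frames back to back.
cudaError_t process_frames_two_streams(uint8_t* h_pinned, size_t frame_bytes, int num_frames) {
    const int kStreams = 2;
    cudaStream_t streams[kStreams];
    uint8_t* d_buf[kStreams] = {nullptr, nullptr};
    for (int s = 0; s < kStreams; ++s) {
        cudaStreamCreate(&streams[s]);
        cudaMalloc(&d_buf[s], frame_bytes);
    }

    unsigned int grid = (unsigned int)((frame_bytes + 255) / 256);
    for (int f = 0; f < num_frames; ++f) {
        int s = f % kStreams;  // alternate frames between the streams
        uint8_t* h_frame = h_pinned + (size_t)f * frame_bytes;

        // Upload, process, and download are ordered within one stream, so a
        // device buffer is reused only after its previous frame has finished.
        cudaMemcpyAsync(d_buf[s], h_frame, frame_bytes, cudaMemcpyHostToDevice, streams[s]);
        invert_bytes<<<grid, 256, 0, streams[s]>>>(d_buf[s], frame_bytes);
        cudaMemcpyAsync(h_frame, d_buf[s], frame_bytes, cudaMemcpyDeviceToHost, streams[s]);
    }

    // Wait for all streams and collect any asynchronous error.
    cudaError_t err = cudaDeviceSynchronize();
    for (int s = 0; s < kStreams; ++s) {
        cudaStreamDestroy(streams[s]);
        cudaFree(d_buf[s]);
    }
    return err;
}
```

While one stream runs its kernel, the other can be copying the next frame, provided the device has a free copy engine. A production pipeline would keep the streams and buffers alive across calls instead of creating them each time.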