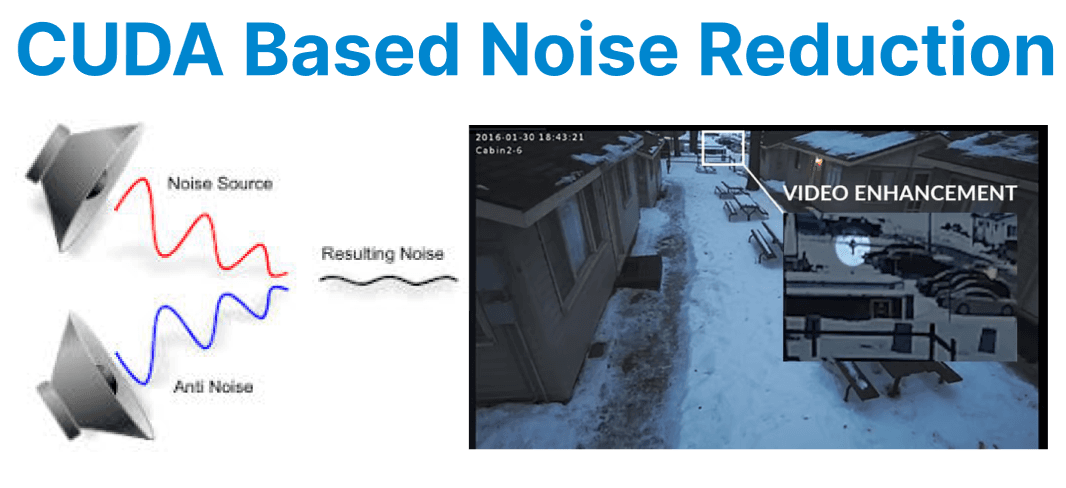

CUDA-based noise reduction and video enhancement techniques use the parallel computing power of NVIDIA GPUs to process video frames efficiently and with low latency. They are critical in pipelines where large volumes of video data must be cleaned, stabilized, or sharpened in real time. Common operations include spatial and temporal denoising, contrast enhancement, edge-preserving smoothing, and sharpening.

Frame Buffer Management

CUDA processes video frames as 2D arrays in device memory, typically using YUV420p or NV12 formats for compatibility with video codecs. The luma (Y) plane is prioritized for noise reduction due to its visual importance. Memory allocation with cudaMallocPitch ensures proper alignment for efficient, coalesced access. Proper frame buffer management reduces memory bottlenecks and improves kernel performance.

    uint8_t* d_luma;
    size_t pitch;  // row stride in bytes, filled in by cudaMallocPitch
    cudaMallocPitch(&d_luma, &pitch, width, height);

- d_luma: Pointer to GPU memory for storing the luma (Y) part of the video frame.

- cudaMallocPitch: Allocates 2D memory with proper alignment for faster access.

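cudaMallocPitch may pad each row, so pitch (the row stride in bytes) can be larger than width. Kernels that read a pitched buffer should address pixel (x, y) as base + y * pitch + x; the simplified kernels in the following sections index with width and therefore assume a tightly packed buffer (pitch == width).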
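As a minimal sketch of how a pitched luma buffer might be filled and addressed, the example below uploads a tightly packed host frame with cudaMemcpy2D and indexes rows by pitch inside the kernel. The kernel invert_luma and the helper invert_frame are hypothetical names chosen for illustration; they are not part of any pipeline described here.

```cuda
#include <cstdint>
#include <vector>
#include <cuda_runtime.h>

// Hypothetical kernel: inverts each luma sample, addressing rows by pitch.
__global__ void invert_luma(uint8_t* luma, size_t pitch, int width, int height) {
    int x = blockIdx.x * blockDim.x + threadIdx.x;
    int y = blockIdx.y * blockDim.y + threadIdx.y;
    if (x < width && y < height) {
        uint8_t* row = luma + y * pitch;  // row stride is pitch bytes, not width
        row[x] = static_cast<uint8_t>(255 - row[x]);
    }
}

// Hypothetical helper: round-trips a tightly packed host frame through a
// pitched device buffer. Returns false if any CUDA call fails.
bool invert_frame(std::vector<uint8_t>& frame, int width, int height) {
    uint8_t* d_luma = nullptr;
    size_t pitch = 0;
    if (cudaMallocPitch(&d_luma, &pitch, width, height) != cudaSuccess) return false;

    // cudaMemcpy2D translates between the packed host stride (width) and the
    // padded device stride (pitch).
    bool ok = cudaMemcpy2D(d_luma, pitch, frame.data(), width, width, height,
                           cudaMemcpyHostToDevice) == cudaSuccess;
    if (ok) {
        dim3 block(16, 16);
        dim3 grid((width + block.x - 1) / block.x, (height + block.y - 1) / block.y);
        invert_luma<<<grid, block>>>(d_luma, pitch, width, height);
        ok = cudaDeviceSynchronize() == cudaSuccess;
    }
    if (ok) {
        ok = cudaMemcpy2D(frame.data(), width, d_luma, pitch, width, height,
                          cudaMemcpyDeviceToHost) == cudaSuccess;
    }
    cudaFree(d_luma);
    return ok;
}
```

The width argument of cudaMallocPitch and cudaMemcpy2D is measured in bytes, which equals the pixel count here only because each luma sample is one byte.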

Spatial Noise Reduction (Median Filter)

A median filter replaces each pixel with the median value of its 3×3 neighborhood, removing impulse noise while preserving edges. Optimized CUDA implementations load local tiles into shared memory to reduce global memory accesses; the simple kernel below reads global memory directly. Median filtering is effective for removing isolated noise artifacts in video frames.

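    __global__ void median_filter(const uint8_t* input, uint8_t* output, int width, int height) {
        int x = blockIdx.x * blockDim.x + threadIdx.x;
        int y = blockIdx.y * blockDim.y + threadIdx.y;
        if (x >= width || y >= height) return;

        // Border pixels lack a full 3x3 neighborhood; copy them through unchanged
        // so the output frame has no uninitialized pixels.
        if (x == 0 || x == width - 1 || y == 0 || y == height - 1) {
            output[y * width + x] = input[y * width + x];
            return;
        }

        // Gather the 3x3 neighborhood around (x, y).
        uint8_t window[9];
        int idx = 0;
        for (int j = -1; j <= 1; ++j)
            for (int i = -1; i <= 1; ++i)
                window[idx++] = input[(y + j) * width + (x + i)];

        // Simple exchange sort; the median is the middle element.
        for (int i = 0; i < 9; ++i)
            for (int j = i + 1; j < 9; ++j)
                if (window[i] > window[j]) {
                    uint8_t tmp = window[i];  // manual swap; CUDA has no built-in swap()
                    window[i] = window[j];
                    window[j] = tmp;
                }

        output[y * width + x] = window[4];
    }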

Explanation:

- Applies a 3×3 median filter to each pixel (excluding edges).

- window[9] stores pixel values from the neighborhood.

- A simple in-place sort orders the nine values so that the median ends up at window[4].

- The median replaces the center pixel in the output to reduce noise.

- Thread (x, y) maps to one pixel location in the frame.
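The shared-memory tiling mentioned above could look roughly like the following sketch. Each block cooperatively loads its 16×16 tile plus a one-pixel halo into shared memory, then every thread reads its 3×3 window from the tile. The names median_filter_tiled, median_frame, and TILE are assumptions made for this example, and unlike the kernel above it handles borders by clamping coordinates rather than skipping edge pixels.

```cuda
#include <cstdint>
#include <vector>
#include <cuda_runtime.h>

constexpr int TILE = 16;  // assumed block edge; launch with dim3 block(TILE, TILE)

__device__ inline void sort2(uint8_t& a, uint8_t& b) {
    if (a > b) { uint8_t t = a; a = b; b = t; }
}

__global__ void median_filter_tiled(const uint8_t* input, uint8_t* output,
                                    int width, int height) {
    __shared__ uint8_t tile[TILE + 2][TILE + 2];  // block plus one-pixel halo

    const int x0 = blockIdx.x * TILE - 1;  // image coordinates of tile[0][0]
    const int y0 = blockIdx.y * TILE - 1;

    // Cooperative load: threads stride over the whole tile, clamping at borders.
    const int tid = threadIdx.y * TILE + threadIdx.x;
    for (int i = tid; i < (TILE + 2) * (TILE + 2); i += TILE * TILE) {
        int ty = i / (TILE + 2), tx = i % (TILE + 2);
        int gx = min(max(x0 + tx, 0), width - 1);
        int gy = min(max(y0 + ty, 0), height - 1);
        tile[ty][tx] = input[gy * width + gx];
    }
    __syncthreads();

    const int x = blockIdx.x * TILE + threadIdx.x;
    const int y = blockIdx.y * TILE + threadIdx.y;
    if (x >= width || y >= height) return;

    // Read the 3x3 window from shared memory instead of global memory.
    uint8_t w[9];
    int n = 0;
    for (int j = 0; j < 3; ++j)
        for (int i = 0; i < 3; ++i)
            w[n++] = tile[threadIdx.y + j][threadIdx.x + i];

    // Partial selection sort: once positions 0..4 are settled, w[4] is the median.
    for (int i = 0; i <= 4; ++i)
        for (int j = i + 1; j < 9; ++j)
            sort2(w[i], w[j]);

    output[y * width + x] = w[4];
}

// Hypothetical host helper for a tightly packed 8-bit plane.
bool median_frame(const std::vector<uint8_t>& in, std::vector<uint8_t>& out,
                  int width, int height) {
    const size_t bytes = static_cast<size_t>(width) * height;
    uint8_t *d_in = nullptr, *d_out = nullptr;
    if (cudaMalloc(&d_in, bytes) != cudaSuccess) return false;
    if (cudaMalloc(&d_out, bytes) != cudaSuccess) { cudaFree(d_in); return false; }
    cudaMemcpy(d_in, in.data(), bytes, cudaMemcpyHostToDevice);

    dim3 block(TILE, TILE);
    dim3 grid((width + TILE - 1) / TILE, (height + TILE - 1) / TILE);
    median_filter_tiled<<<grid, block>>>(d_in, d_out, width, height);

    out.resize(bytes);
    bool ok = cudaMemcpy(out.data(), d_out, bytes, cudaMemcpyDeviceToHost) == cudaSuccess;
    cudaFree(d_in);
    cudaFree(d_out);
    return ok;
}
```

Whether tiling pays off for a window this small depends on the GPU's cache behavior, so it is worth profiling against the simpler kernel before adopting it.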

Bilateral Filter for Edge-Preserving Denoising

A bilateral filter smooths homogeneous regions while preserving edges using a weighted Gaussian function based on both spatial distance and intensity difference.

This filter is typically implemented using shared memory for local tiles and requires tuning of spatial (σs) and intensity (σr) weights.

    // Pseudocode structure
    __global__ void bilateral_filter(uint8_t* input, uint8_t* output, ...) {
        // Load shared tile, compute weights, apply normalized convolution
    }

Explanation:

- Each thread processes one pixel using a bilateral filter.

- Shared memory is used to load a tile of the image for fast access.

- The kernel computes spatial and intensity weights to preserve edges.

- A weighted sum is applied to nearby pixels to smooth noise without blurring edges.

- The final result is written to the corresponding location in the output.
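To make the pseudocode more concrete, here is one possible sketch of the weight computation and normalized sum. For brevity it reads global memory directly and omits the shared-memory tile. The kernel name bilateral_filter_naive, the helper bilateral_frame, and the parameters radius, sigma_s, and sigma_r are assumptions for this example rather than a fixed interface.

```cuda
#include <cstdint>
#include <vector>
#include <cuda_runtime.h>

// Naive bilateral filter: one thread per pixel, no shared-memory tiling.
__global__ void bilateral_filter_naive(const uint8_t* input, uint8_t* output,
                                       int width, int height, int radius,
                                       float sigma_s, float sigma_r) {
    int x = blockIdx.x * blockDim.x + threadIdx.x;
    int y = blockIdx.y * blockDim.y + threadIdx.y;
    if (x >= width || y >= height) return;

    const float center = input[y * width + x];
    const float inv2ss = 1.0f / (2.0f * sigma_s * sigma_s);
    const float inv2sr = 1.0f / (2.0f * sigma_r * sigma_r);
    float sum = 0.0f, norm = 0.0f;

    for (int dy = -radius; dy <= radius; ++dy) {
        for (int dx = -radius; dx <= radius; ++dx) {
            // Clamp so border pixels reuse the nearest valid sample.
            int nx = min(max(x + dx, 0), width - 1);
            int ny = min(max(y + dy, 0), height - 1);
            float v = input[ny * width + nx];
            float d2 = static_cast<float>(dx * dx + dy * dy);  // spatial distance^2
            float r = v - center;                              // intensity difference
            // Product of the spatial and range Gaussians, as a single exponential.
            float wgt = __expf(-d2 * inv2ss - r * r * inv2sr);
            sum += wgt * v;
            norm += wgt;
        }
    }
    // norm >= 1 because the center pixel always contributes weight 1.
    output[y * width + x] = static_cast<uint8_t>(__float2int_rn(sum / norm));
}

// Hypothetical host helper for a tightly packed 8-bit plane.
bool bilateral_frame(const std::vector<uint8_t>& in, std::vector<uint8_t>& out,
                     int width, int height, int radius, float sigma_s, float sigma_r) {
    const size_t bytes = static_cast<size_t>(width) * height;
    uint8_t *d_in = nullptr, *d_out = nullptr;
    if (cudaMalloc(&d_in, bytes) != cudaSuccess) return false;
    if (cudaMalloc(&d_out, bytes) != cudaSuccess) { cudaFree(d_in); return false; }
    cudaMemcpy(d_in, in.data(), bytes, cudaMemcpyHostToDevice);

    dim3 block(16, 16);
    dim3 grid((width + block.x - 1) / block.x, (height + block.y - 1) / block.y);
    bilateral_filter_naive<<<grid, block>>>(d_in, d_out, width, height,
                                            radius, sigma_s, sigma_r);

    out.resize(bytes);
    bool ok = cudaMemcpy(out.data(), d_out, bytes, cudaMemcpyDeviceToHost) == cudaSuccess;
    cudaFree(d_in);
    cudaFree(d_out);
    return ok;
}
```

A small sigma_r keeps strong edges intact because pixels across the edge receive almost no weight, while a larger sigma_r makes the filter behave more like a plain Gaussian blur.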

Unsharp Masking for Sharpness Enhancement

Unsharp masking enhances sharpness by subtracting a blurred image from the original and adding a scaled difference back. CUDA enables fast parallel processing using separable Gaussian blurs. Adaptive sharpening adjusts the enhancement based on local contrast to avoid artifacts.

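    __global__ void unsharp_mask(const uint8_t* input, const uint8_t* blurred, uint8_t* output, int width, int height, float amount) {
        int idx = blockIdx.x * blockDim.x + threadIdx.x;
        if (idx < width * height) {
            // High-frequency detail: original minus its blurred copy.
            int diff = input[idx] - blurred[idx];
            // Add the scaled detail back, rounding to the nearest integer.
            int enhanced = __float2int_rn(input[idx] + amount * diff);
            // Clamp to the valid 8-bit range before storing.
            output[idx] = static_cast<uint8_t>(min(255, max(0, enhanced)));
        }
    }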

Explanation:

- Each thread enhances one pixel by subtracting the blurred version from the original.

- The difference (diff) is scaled and added back to sharpen the image.

- Pixel values are clamped to [0, 255] to prevent overflow or underflow.

- The result is stored in the output frame, producing a sharpened image.
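The blurred input to the kernel above has to come from somewhere. One way to produce it is a separable blur, sketched here as a single 1D kernel launched twice, once along rows and once along columns. The 5-tap binomial weights [1 4 6 4 1] / 16 are a stand-in approximation of a Gaussian chosen for this example, and blur_pass and gaussian_blur are hypothetical names.

```cuda
#include <cstdint>
#include <vector>
#include <cuda_runtime.h>

// One 1D pass of the 5-tap binomial kernel [1 4 6 4 1] / 16.
// (step_x, step_y) selects the direction: (1, 0) horizontal, (0, 1) vertical.
__global__ void blur_pass(const uint8_t* src, uint8_t* dst, int width, int height,
                          int step_x, int step_y) {
    int x = blockIdx.x * blockDim.x + threadIdx.x;
    int y = blockIdx.y * blockDim.y + threadIdx.y;
    if (x >= width || y >= height) return;

    const int taps[5] = {1, 4, 6, 4, 1};
    int acc = 0;
    for (int k = -2; k <= 2; ++k) {
        // Clamp coordinates so border pixels reuse the nearest valid sample.
        int sx = min(max(x + k * step_x, 0), width - 1);
        int sy = min(max(y + k * step_y, 0), height - 1);
        acc += taps[k + 2] * src[sy * width + sx];
    }
    dst[y * width + x] = static_cast<uint8_t>((acc + 8) / 16);  // round to nearest
}

// Hypothetical host helper: blurs a tightly packed 8-bit plane with a
// horizontal pass followed by a vertical pass.
bool gaussian_blur(const std::vector<uint8_t>& in, std::vector<uint8_t>& blurred,
                   int width, int height) {
    const size_t bytes = static_cast<size_t>(width) * height;
    uint8_t *d_a = nullptr, *d_b = nullptr;
    if (cudaMalloc(&d_a, bytes) != cudaSuccess) return false;
    if (cudaMalloc(&d_b, bytes) != cudaSuccess) { cudaFree(d_a); return false; }
    cudaMemcpy(d_a, in.data(), bytes, cudaMemcpyHostToDevice);

    dim3 block(16, 16);
    dim3 grid((width + block.x - 1) / block.x, (height + block.y - 1) / block.y);
    blur_pass<<<grid, block>>>(d_a, d_b, width, height, 1, 0);  // rows: a -> b
    blur_pass<<<grid, block>>>(d_b, d_a, width, height, 0, 1);  // columns: b -> a

    blurred.resize(bytes);
    bool ok = cudaMemcpy(blurred.data(), d_a, bytes, cudaMemcpyDeviceToHost) == cudaSuccess;
    cudaFree(d_a);
    cudaFree(d_b);
    return ok;
}
```

Splitting the blur into two 1D passes reads 10 samples per pixel instead of the 25 a full 5×5 window would need, which is the main reason separable filters are attractive on the GPU.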

Temporal Filtering (Frame-to-Frame Denoising)

Temporal denoising reduces flicker by averaging pixels across consecutive frames, often using motion estimation to align moving objects. CUDA accelerates these operations with parallel processing and optical flow calculations. This smooths noise in static regions while preserving motion details. Proper motion handling prevents ghosting artifacts.

    __global__ void temporal_filter(const uint8_t* prev, const uint8_t* curr, uint8_t* output, int width, int height) {
        int idx = blockIdx.x * blockDim.x + threadIdx.x;
        if (idx < width * height) {
            // Weighted average (3:1); the +2 makes the integer division round to nearest.
            output[idx] = (3 * curr[idx] + prev[idx] + 2) / 4;
        }
    }

Explanation:

- Each thread processes one pixel by blending the current and previous frames.

- A weighted average (75% current + 25% previous) is used to reduce flicker and noise. Because this simple kernel does no motion compensation, moving regions can show ghosting.

- The result is written to the output buffer, producing a temporally smoothed frame.
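Full motion estimation is beyond a short example, but a simpler motion-adaptive variant illustrates how ghosting can be limited. In this sketch, a pixel is blended only when the frame-to-frame difference is small; a large difference is treated as likely motion and the current pixel is passed through. This is a substitute for motion-compensated filtering, not an implementation of it, and the names temporal_filter_adaptive, temporal_blend, and motion_threshold are assumptions for the example.

```cuda
#include <cstdint>
#include <vector>
#include <cuda_runtime.h>

__global__ void temporal_filter_adaptive(const uint8_t* prev, const uint8_t* curr,
                                         uint8_t* output, int num_pixels,
                                         int motion_threshold) {
    int idx = blockIdx.x * blockDim.x + threadIdx.x;
    if (idx >= num_pixels) return;

    const int c = curr[idx];
    const int p = prev[idx];
    if (abs(c - p) > motion_threshold) {
        // Large change: probably motion, so keep the current pixel to avoid ghosting.
        output[idx] = static_cast<uint8_t>(c);
    } else {
        // Small change: probably noise, so blend 75% current with 25% previous.
        output[idx] = static_cast<uint8_t>((3 * c + p + 2) / 4);
    }
}

// Hypothetical host helper operating on tightly packed 8-bit planes of equal size.
bool temporal_blend(const std::vector<uint8_t>& prev, const std::vector<uint8_t>& curr,
                    std::vector<uint8_t>& out, int motion_threshold) {
    const size_t bytes = curr.size();
    if (prev.size() != bytes) return false;
    uint8_t *d_prev = nullptr, *d_curr = nullptr, *d_out = nullptr;
    bool ok = cudaMalloc(&d_prev, bytes) == cudaSuccess &&
              cudaMalloc(&d_curr, bytes) == cudaSuccess &&
              cudaMalloc(&d_out, bytes) == cudaSuccess;
    if (ok) {
        cudaMemcpy(d_prev, prev.data(), bytes, cudaMemcpyHostToDevice);
        cudaMemcpy(d_curr, curr.data(), bytes, cudaMemcpyHostToDevice);
        const int threads = 256;
        const int blocks = static_cast<int>((bytes + threads - 1) / threads);
        temporal_filter_adaptive<<<blocks, threads>>>(d_prev, d_curr, d_out,
                                                      static_cast<int>(bytes),
                                                      motion_threshold);
        out.resize(bytes);
        ok = cudaMemcpy(out.data(), d_out, bytes, cudaMemcpyDeviceToHost) == cudaSuccess;
    }
    cudaFree(d_prev);  // cudaFree(nullptr) is a no-op, so partial failures are safe
    cudaFree(d_curr);
    cudaFree(d_out);
    return ok;
}
```

The threshold trades noise reduction against ghosting: a low value protects moving edges but leaves more noise in place, so it typically needs tuning per source.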

Performance Optimization Techniques

- Use shared memory for neighborhood-based filters (e.g., median, bilateral).

- Launch kernels with thread blocks whose size is a multiple of the 32-thread warp (e.g., 16×16 = 256 threads).

- Use cudaMemcpyAsync() with pinned (page-locked) host memory so transfers can overlap with kernel execution.

- Tune grid/block sizes for occupancy and memory throughput.
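The pinned-memory and asynchronous-copy advice can be sketched as follows. The example stages data in a page-locked buffer and queues the upload, a kernel, and the download on one stream. The kernel brighten and the helper brighten_async are placeholders invented for this illustration; a real pipeline would substitute its own filter kernels.

```cuda
#include <cstdint>
#include <cstring>
#include <vector>
#include <cuda_runtime.h>

// Placeholder kernel: adds an offset to every sample, clamped to 255.
__global__ void brighten(uint8_t* data, int n, int offset) {
    int idx = blockIdx.x * blockDim.x + threadIdx.x;
    if (idx < n) data[idx] = static_cast<uint8_t>(min(255, data[idx] + offset));
}

// Hypothetical helper: upload, process, and download on a single stream.
bool brighten_async(const std::vector<uint8_t>& in, std::vector<uint8_t>& out, int offset) {
    const size_t bytes = in.size();
    uint8_t* h_pinned = nullptr;
    uint8_t* d_buf = nullptr;
    cudaStream_t stream;

    // Page-locked host memory is what allows the copies to be truly asynchronous.
    if (cudaMallocHost(&h_pinned, bytes) != cudaSuccess) return false;
    if (cudaMalloc(&d_buf, bytes) != cudaSuccess) { cudaFreeHost(h_pinned); return false; }
    if (cudaStreamCreate(&stream) != cudaSuccess) {
        cudaFree(d_buf);
        cudaFreeHost(h_pinned);
        return false;
    }

    std::memcpy(h_pinned, in.data(), bytes);

    // All three operations are queued in order on the stream; the host thread
    // is free to do other work until cudaStreamSynchronize.
    cudaMemcpyAsync(d_buf, h_pinned, bytes, cudaMemcpyHostToDevice, stream);
    const int threads = 256;  // a multiple of the 32-thread warp
    const int blocks = static_cast<int>((bytes + threads - 1) / threads);
    brighten<<<blocks, threads, 0, stream>>>(d_buf, static_cast<int>(bytes), offset);
    cudaMemcpyAsync(h_pinned, d_buf, bytes, cudaMemcpyDeviceToHost, stream);

    bool ok = cudaStreamSynchronize(stream) == cudaSuccess;
    if (ok) {
        out.resize(bytes);
        std::memcpy(out.data(), h_pinned, bytes);
    }
    cudaStreamDestroy(stream);
    cudaFree(d_buf);
    cudaFreeHost(h_pinned);
    return ok;
}
```

With a single stream the three steps still run one after another. The latency benefit appears when two or more streams are used so that the transfer for one frame overlaps with the kernel for another.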

Output Encoding and Integration

Enhanced frames are encoded with a hardware encoder such as NVENC, often through FFmpeg for automated processing. When the encoder is driven through the NVENC API, it can take CUDA device buffers as input, so frames stay in GPU memory between the enhancement and encoding stages and CPU involvement is minimal. Piping raw frames to the ffmpeg command-line tool, as in the command here, is simpler but requires copying each frame back to host memory first. Keeping the whole pipeline on the GPU maximizes throughput and supports real-time streaming or storage.

    ffmpeg -f rawvideo -pix_fmt yuv420p -s 1920x1080 -i - -c:v h264_nvenc output.mp4

Explanation:

- -f rawvideo: Raw input stream

- -pix_fmt yuv420p: Expected pixel format

- -i -: Reads from stdin

- -c:v h264_nvenc: Encodes with NVENC

- output.mp4: Final video file
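For the stdin route, the application has to write each frame's planes to the pipe in the order ffmpeg expects. A rough sketch is shown below, assuming tightly packed planar YUV 4:2:0 data in device memory with even width and height. The helper write_yuv420p_frame is a hypothetical name, and it copies through host memory, so it is not a zero-copy path.

```cuda
#include <cstdint>
#include <cstdio>
#include <vector>
#include <cuda_runtime.h>

// Hypothetical helper: downloads the Y, U, and V planes from device memory and
// writes them to `out` in the Y, U, V order that -pix_fmt yuv420p expects.
// Assumes tightly packed planes and even width and height.
bool write_yuv420p_frame(FILE* out, const uint8_t* d_y, const uint8_t* d_u,
                         const uint8_t* d_v, int width, int height) {
    const size_t luma_bytes = static_cast<size_t>(width) * height;
    const size_t chroma_bytes = luma_bytes / 4;  // each chroma plane is (w/2) x (h/2)
    std::vector<uint8_t> host(luma_bytes + 2 * chroma_bytes);

    if (cudaMemcpy(host.data(), d_y, luma_bytes,
                   cudaMemcpyDeviceToHost) != cudaSuccess) return false;
    if (cudaMemcpy(host.data() + luma_bytes, d_u, chroma_bytes,
                   cudaMemcpyDeviceToHost) != cudaSuccess) return false;
    if (cudaMemcpy(host.data() + luma_bytes + chroma_bytes, d_v, chroma_bytes,
                   cudaMemcpyDeviceToHost) != cudaSuccess) return false;

    // One frame is width * height * 3 / 2 bytes in yuv420p.
    return fwrite(host.data(), 1, host.size(), out) == host.size();
}
```

On a POSIX system, the FILE* could come from popen() with the ffmpeg command above opened in "w" mode; the helper would then be called once per frame and the pipe closed with pclose() when the stream ends.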